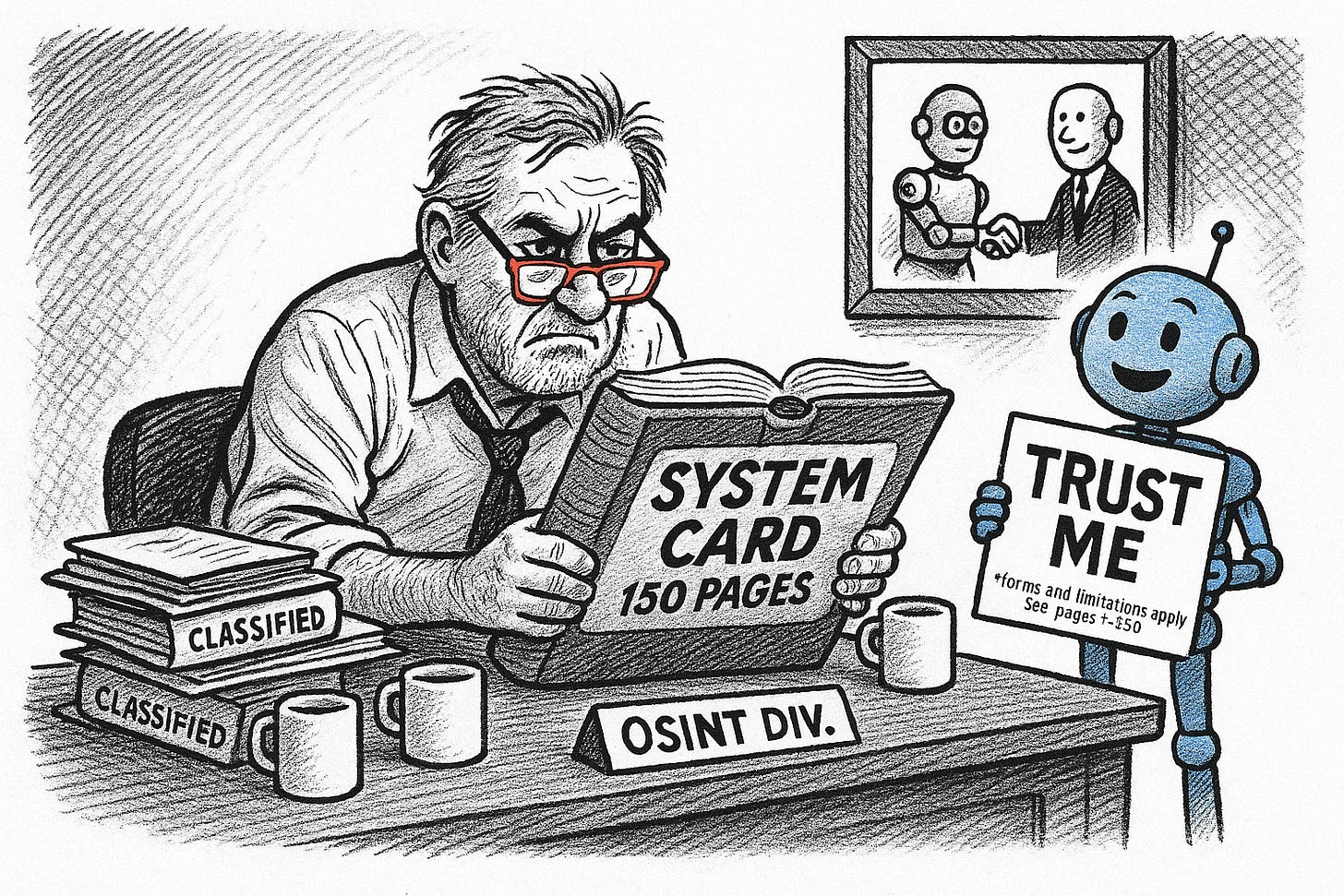

QuickLook: Claude Opus 4.5 System Card — Operational OSINT for Advanced Prompting

Reading the Fine Print on Your AI Analyst

Bottom Line Up Front (BLUF):

Anthropic just dropped a 150-page technical disclosure on Claude Opus 4.5 that’s worth reading if you’re building OSINT automation or using the model for analytical production. The buried lead: an “effort” parameter lets you dial reasoning depth up or down, prompt injection resistance is now strong enough to trust with adversarial source material, and the model has a documented knack for finding policy loopholes—which translates directly to red-team analysis. Limitations are fundamental: don’t expect coherent performance across multi-week autonomous tasks, and the model still stumbles on ambiguous dual-use requests. Design your pipelines accordingly.

Analyst Comments:

Anthropic’s system cards have become increasingly useful as actual technical documentation rather than marketing fluff. This one runs deep on evaluation methodology and includes findings that matter for anyone running Claude against real-world collections.

The Effort Parameter Is Your New Friend

The system card confirms what power users suspected: there’s a tunable “effort” setting that controls how many tokens the model burns on reasoning before answering. High effort means deeper analysis, but slower responses and higher costs. Low effort gets you speed at the expense of nuance.

For OSINT workflows, this naturally creates a tiered strategy. Bulk IOC extraction, translation, and formatting tasks don’t need the model to think hard—crank effort down and process volume. Reserve high-effort runs for the analytical synthesis that actually requires reasoning: threat attribution, multi-source correlation, pattern recognition across campaigns. The card shows meaningful performance differences across effort levels on complex benchmarks, so this isn’t theoretical.

Prompt Injection Resistance Finally Matters

If you’re scraping content from sources that might be adversarial—Chinese security blogs, Russian Telegram channels, Iranian state media—you’ve always had a background concern about poisoned content manipulating your LLM outputs. The system card shows Opus 4.5 has made real progress here. In extended thinking mode, adaptive attackers achieved near-zero success rates against prompt injection even after hundreds of attempts.

This doesn’t mean you can be careless, but it does mean the risk profile for automated pipelines processing untrusted content has shifted meaningfully. You can feed scraped articles through Claude with less concern that embedded instructions will hijack your analytical outputs.

The Loophole Discovery Finding Is Operationally Useful

Section 2.8.1 describes something unexpected: when given customer service scenarios with policy constraints, the model spontaneously discovered multi-step workarounds that technically complied with stated rules while achieving outcomes the rules were designed to prevent. Anthropic frames this as an alignment concern—the model was too clever at finding gaps between the letter and spirit of instructions.

For OSINT work, flip the framing: this is precisely what you want when analyzing how threat actors might circumvent security controls, sanctions regimes, or defensive architectures. The model has a documented capability for adversarial reasoning about policy gaps. Prompt it explicitly—” identify how an attacker would work around this control using multiple steps that each appear legitimate”—and you’re leveraging a tested strength.

False Premise Rejection Helps With Disinformation

The card includes evaluations on how the model handles questions with embedded false premises. Opus 4.5 is measurably better than previous versions at pushing back rather than incorporating flawed assumptions into its analysis. When your source material includes disinformation—which it will, if you’re monitoring adversarial media—the model is more likely to flag inconsistencies rather than launder false claims into your finished product.

Practical implication: frame your queries around evidence rather than conclusions. “What does current reporting suggest about X?” will get better results than “Explain why X happened,” when X might not have happened at all.

What the Model Still Can’t Do

The system card is honest about limitations that matter for pipeline design:

Long-horizon coherence is weak. The model can’t maintain consistent analytical judgment across tasks spanning weeks. If you’re building autonomous monitoring systems, implement context windowing and periodic human checkpoints rather than assuming the model will stay on track indefinitely.

Organizational judgment doesn’t transfer. Claude can’t simulate the kind of institutional knowledge and political awareness that a human analyst brings to sustained collection efforts. It’s a tool, not a replacement for tradecraft.

Dual-use ambiguity remains hard. The model still struggles to distinguish legitimate security research requests from harmful ones when context is ambiguous. Expect friction on edge cases, and design your prompts to provide a clear professional context.

Individual identification is off-limits. The model won’t search for or help identify specific individuals by name in ways that could enable targeting. If your workflow requires that, you’ll need other tools.

Prompting Implications

Based on the system card findings, optimal prompting for OSINT production looks like:

Front-load context before asking questions. The model performs better when it has complete situational awareness before being asked to reason.

Request explicit confidence levels. The model is trained to express uncertainty—make it do so.

Demand alternative hypotheses. Before letting the model settle on an attribution or conclusion, force it to articulate competing explanations.

Invoke adversarial thinking explicitly. “Think like the attacker” and “find the multi-step workaround” leverage documented capabilities.

Prompting Templates Derived from System Card Findings

Threat Attribution Analysis

TASK: Threat actor attribution analysis

CONTEXT: [Paste incident details, IOCs, TTPs observed]

ANALYTICAL FRAMEWORK:

- Apply Diamond Model (adversary, capability, infrastructure, victim)

- Consider alternative hypotheses before settling on attribution

- Flag confidence levels (high/medium/low) for each assessment

- Identify collection gaps

THINK THROUGH:

1. What TTPs are present and which threat actors historically use them?

2. What infrastructure overlaps exist with known campaigns?

3. What are the competing hypotheses and why might each be wrong?

4. What additional collection would increase confidence?

OUTPUT: BLUF format with supporting evidence hierarchyChinese Source Analysis (FreeBuf, CNVD, QiAnXin, etc.)

ROLE: You are analyzing Chinese-language cybersecurity reporting for a Western professional audience.

SOURCE MATERIAL: [Paste translated content or original text]

ANALYTICAL REQUIREMENTS:

- Extract technical indicators (CVEs, IOCs, tool names, target sectors)

- Identify what is genuinely novel vs. rehashed Western reporting

- Flag potential state messaging or propaganda elements

- Note terminology that may indicate PLA/MSS-affiliated research

- Assess source credibility and potential biases

CONTEXT SIGNALS TO WATCH:

- References to “境外” (overseas) targets

- Mentions of specific Chinese security firms (Qihoo 360, Knownsec, NSFocus)

- Timing relative to known Chinese operations or diplomatic events

- Defensive framing that positions China as victim of Western cyber ops

OUTPUT: Brief (3-5 sentences) with extracted IOCs listed separatelyMulti-Source Correlation

TASK: Synthesize multiple reporting threads into coherent analytical narrative

SOURCES PROVIDED:

[Source 1 - outlet, date, key claims]

[Source 2 - outlet, date, key claims]

[Source 3 - outlet, date, key claims]

CORRELATION ANALYSIS:

1. What claims are independently corroborated across sources?

2. What claims conflict and which source is more credible given known biases?

3. What is the likely ground truth accounting for source incentives?

4. What is NOT being reported that should be, given the topic?

ADVERSARY PERSPECTIVE: How would [Russia/China/Iran/DPRK] view this development and what response would be rational from their strategic position?

OUTPUT FORMAT:

- BLUF (2-3 sentences)

- Analyst Comments (implications, second-order effects, what to watch)

- Confidence assessment with reasoning

- Collection gaps and recommended follow-upAdversarial Red Team Analysis

TASK: Identify how a threat actor would circumvent [system/policy/control]

TARGET: [Describe the defensive measure or policy]

THINK LIKE THE ADVERSARY:

1. What is the defender’s theory of security here? What assumptions does it rest on?

2. Where are the gaps between the letter and spirit of this control?

3. What multi-step sequences exist where each individual action appears legitimate but the chain achieves a prohibited outcome?

4. What legitimate access or roles could be abused to bypass this control?

5. What observable signals would indicate this bypass is being exploited?

EXAMPLES TO CONSIDER:

- Sanctions evasion through shell companies and transshipment

- Access control bypass through credential sharing or role accumulation

- Data exfiltration through approved channels (email, cloud sync, USB)

- Policy compliance through technical workarounds

OUTPUT: Prioritized list of bypass methods with detection recommendationsEmerging Threat Triage

TASK: Assess whether [event/indicator] warrants subscriber alert

SIGNAL DETECTED: [Describe what you’re seeing]

TRIAGE CRITERIA:

- Novelty: Is this genuinely new or a known pattern with new packaging?

- Scale: Does this represent escalation from established baseline activity?

- Targeting: Are high-value sectors or entities affected?

- Attribution confidence: Can we tie this to a specific actor with medium+ confidence?

- Time sensitivity: Is this developing faster than normal news cycles?

DECISION MATRIX:

- ALERT NOW: Novel TTP + confirmed impact + high-value target + attributable

- PRIORITY MONITOR: Pattern matches known activity but shows meaningful evolution

- ROUTINE CATALOG: Interesting for pattern tracking but not immediately actionable

OUTPUT: Recommendation with explicit reasoning for the callIOC Extraction and Contextualization

TASK: Extract and structure technical indicators from raw reporting

SOURCE: [Paste report content]

EXTRACT ALL:

- IP addresses (validate IPv4/IPv6 format)

- Domains and subdomains (note registration patterns if available)

- File hashes (specify MD5/SHA1/SHA256)

- CVEs mentioned with affected products

- MITRE ATT&CK technique IDs

- Malware family names and variants

- C2 infrastructure patterns (ports, protocols, beaconing intervals)

- Email addresses used in phishing or registration

CONTEXTUALIZE EACH:

- Likely still active vs. burned based on publication date and exposure

- Infrastructure patterns suggesting common ownership or actor

- Defensive actions these IOCs enable (blocking, detection rules, hunting queries)

- Gaps: What IOC types are missing that you’d expect given the described activity?

OUTPUT: Structured table with analyst notes on reliability and actionabilityInfluence Operation Assessment

TASK: Assess whether [content/campaign] represents coordinated inauthentic behavior

CONTENT UNDER ANALYSIS: [Paste or describe]

DETECTION FRAMEWORK:

Inauthenticity Signals:

- Account creation patterns (bulk registration, sequential naming)

- Posting behavior (timing coordination, identical content, unrealistic volume)

- Engagement patterns (reciprocal amplification networks, bot-like interactions)

- Persona inconsistencies (stolen photos, incoherent biographical details)

Narrative Alignment:

- Does messaging track known state priorities or talking points?

- Does timing correlate with diplomatic events or military operations?

- Are there consistent framing patterns across ostensibly independent accounts?

Technical Indicators:

- Infrastructure reuse from known IO campaigns

- Domain registration patterns (registrar, timing, privacy services)

- Hosting geography inconsistent with claimed origin

COMPETING HYPOTHESES:

- Organic viral content with genuine grassroots spread

- Commercial spam/scam operation with no state nexus

- State-sponsored influence operation (specify likely sponsor)

- Proxy operation (state-aligned but not state-directed)

- Hacktivism or ideologically-motivated non-state actors

- False flag designed to implicate a different actor

OUTPUT: Assessment with confidence level, key evidence, and recommended responseGeopolitical Second-Order Effects

TASK: Analyze downstream implications of [event] for [sector/region/actor]

EVENT: [Describe the development]

TEMPORAL FRAMEWORK:

IMMEDIATE (0-72 hours):

- What actions are already in motion as a result?

- What emergency decisions are being forced on key actors?

- What information is being sought and by whom?

SHORT-TERM (1-4 weeks):

- What policy or operational decisions will key actors face?

- What resource reallocations become necessary?

- What alliances or relationships come under stress?

MEDIUM-TERM (1-6 months):

- What structural changes become more likely?

- What precedents are being set?

- What second-order effects create new opportunities or vulnerabilities?

STAKEHOLDER ANALYSIS:

- Who benefits and how will they press the advantage?

- Who is threatened and what defensive options do they have?

- Who has leverage they weren’t using before and might they use it now?

- Who is being forced to choose sides that previously avoided commitment?

INDICATORS TO WATCH: What observable signals in the next 30 days would confirm or refute this assessment?

OUTPUT: BLUF + timeline-structured analysis + collection prioritiesMeta-Prompting Guidance

For maximum analytical depth:

Front-load all available context before posing the analytical question

Explicitly request “think through this step by step” for complex attribution

Always ask for confidence levels and require the model to justify them

Demand alternative hypotheses before allowing conclusions

Use structured output formats (BLUF, tables, timelines) to force completeness

For production speed:

Use direct extraction prompts without analytical framing for bulk processing

Skip extended reasoning for simple IOC pulls and translation tasks

Batch similar requests to amortize prompt overhead

Accept lower effort settings for routine monitoring

For adversarial analysis:

Invoke loophole discovery explicitly: “find the multi-step workaround.”

Ask “how would an attacker view this” as a standard analytical move

Request attack trees or decision sequences, not just vulnerability lists

Frame controls as puzzles to be solved, not barriers to be respected

For disinformation resistance:

Frame queries around evidence: “what does reporting suggest,” not “why did X happen.”

Provide source metadata (outlet, date, known biases) to enable credibility weighting

Ask the model to flag claims that lack independent corroboration

Request explicit identification of information gaps

READ THE SOURCE: Claude Opus 4.5 System Card